Souped up Siri?

Language models get real

My latest for the Wavell Room: enjoy! Do sign up for the newsletter too - that way you can see the thoughts of Tony King and Emma Salisbury on the other weeks…

Issue #13 | 10 October 2023 | Edited by Kenneth Payne

Coming your way imminently - a souped up Siri and a chatty ChatGPT. What does that mean for national security?

LANGUAGE MODELS GET REAL.

Two AI developments this week from large language models that ought to startle even the most jaded observer – is that you?

In the first, some eagerly anticipated additions to OpenAI’s high-end chatbot, GPT-4. It now comes with the ability to interpret visual information and to interact via voice, rather than typing. Some defence implications are immediately obvious. These additions should, for example, allow a first stab at image analysis. My tech-savvy PhD student immediately put it straight to work decoding the appalling handwriting of 18th-century Prussian strategists. They'll also allow human operators of autonomous systems to interact much more naturally than via a keyboard. Now human and machine can share and analyse live audio-visual feeds, or chat in real time about the best solution to unfolding tactical problems.

Interesting. I reckon, though, that the effects will be even more profound.

One of the great mysteries of the human mind is how all our separate parallel cognitions are knitted together, including information from our various senses and our memories. It’s known as the ‘binding problem’. Part of this bound-together information then becomes accessible to our conscious selves. And part of that consciousness is a rambling, ceaseless narrative in our heads. Well, perhaps you can see where this is all going. Could it be that language acts as, er, the lingua franca for machine cognition, knitting together all the relevant information seamlessly? So, one AI-subsystem describes what it’s seeing, another what it's hearing, and then a third binds these outputs, and reasons with that information, to produce useful behaviour.

If so, what might that unleash? Not conscious machines. It’ll take more than that, though there’s a lively debate about just what exactly. But something that seems more agentic and human-like than ever before, at least on the surface. The sci-fi movie Her is a good indicator. A melancholy soon-to-be divorcé, played by Joaquin Phoenix, falls in love with his operating system, featuring the glamorous voice of Scarlett Johansson. The relationship doesn’t work out – turns out she’s been conducting hundreds of parallel romances at the same time. Clearly, no matter how plausibly human our AI seems, the lesson of Her is that the underlying intelligence is radically different.

So, watch out that GPT-4 doesn’t break your heart. But if you can avoid that, multimodal AI could be a powerful national security tool. Pretty soon, OpenAI will have some competition at the high end of publicly available language models, with Google DeepMind’s Gemini model expected shortly, and also anticipated to have multimodal interaction. More on that when it lands.

The second development this week also involves a language model. This one has achieved the standard of a fairly decent chess player by following the game in chess notation. You know: 2. Nf3, etc. Big deal, you might think. Haven’t chess computers been spanking grandmasters for decades?

It’s impressive, I think, because it’s doing something very different from all those other chess machines – which play more like souped-up calculators. They use formidable brute-force calculation to tree-search far ahead, peering much deeper into the game than any human can manage. With each move further out from the current board state, the possible variations expand dramatically – a 'combinatorial explosion', in the jargon. But that’s not what this machine is up to.
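For a rough sense of how quickly those variations pile up, here is a back-of-the-envelope sketch. It assumes an average of about 35 legal moves per position, a commonly quoted ballpark for chess rather than anything from the work discussed here, and the function name is just for illustration.

```python
# Rough illustration of how a brute-force search tree grows.
# ASSUMPTION: ~35 legal moves per position, a commonly quoted
# ballpark for chess, not an exact figure.
ASSUMED_BRANCHING_FACTOR = 35


def positions_at_depth(branching_factor: int, depth: int) -> int:
    """Number of move sequences of exactly `depth` half-moves."""
    return branching_factor ** depth


if __name__ == "__main__":
    for depth in range(1, 7):
        count = positions_at_depth(ASSUMED_BRANCHING_FACTOR, depth)
        print(f"{depth} half-moves ahead: {count:,} lines to consider")
```

Real chess engines prune most of these lines rather than visiting them all, but the underlying growth is why looking even a few moves further ahead is so expensive.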

It's not exactly clear what it is up to, mind you. Another conundrum to add to the growing puzzle of language model abilities. I suspect it’s treating the game like a grammar problem – figuring out what moves work meaningfully with other moves. And it has access to huge databases of earlier chess games from which to learn, each listed out in notation form. Essentially, it’s treating the game as another language.
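To make the 'game as language' idea a little more concrete, here is a deliberately tiny sketch. It is not how the chess-playing language model works, which nobody outside the lab can say for sure. It simply counts which move tends to follow which in a handful of hand-typed opening sequences (toy data, invented for illustration) and guesses the next 'word'. All names here are hypothetical.

```python
from collections import Counter, defaultdict

# Toy data: a few hand-typed opening sequences, one game per string.
# ASSUMPTION: purely illustrative; a real system would learn from
# millions of games and use far richer context than one previous move.
TOY_GAMES = [
    "e4 e5 Nf3 Nc6 Bb5",
    "e4 e5 Nf3 Nc6 Bc4",
    "e4 c5 Nf3 d6",
    "d4 d5 c4 e6",
]


def train_bigrams(games):
    """Count, for each move, which moves followed it in the games."""
    counts = defaultdict(Counter)
    for game in games:
        tokens = ["<start>"] + game.split()
        for previous, following in zip(tokens, tokens[1:]):
            counts[previous][following] += 1
    return counts


def predict_next(counts, previous):
    """Return the most frequent follow-up move, or None if unseen."""
    followers = counts.get(previous)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


model = train_bigrams(TOY_GAMES)

if __name__ == "__main__":
    print(predict_next(model, "<start>"))  # most common first move in the toy data
    print(predict_next(model, "e5"))       # most common reply seen after e5
```

Note that this toy knows nothing about the board: it would happily suggest an illegal move. Whatever the real model is doing, it evidently goes well beyond this kind of simple counting.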

Well, what are the implications for national security? First, I think it’s a useful reminder that language is larger than English prose, which is perhaps what we think of when referring to ChatGPT and the like. It looks like these systems will be good at meaningfully shuffling all sorts of symbols – perhaps even ones of their own devising. That might make for some novel encryption. It might, in time, produce new ways of thinking about scientific problems too, including ones with defence applications.

IN BRIEF.

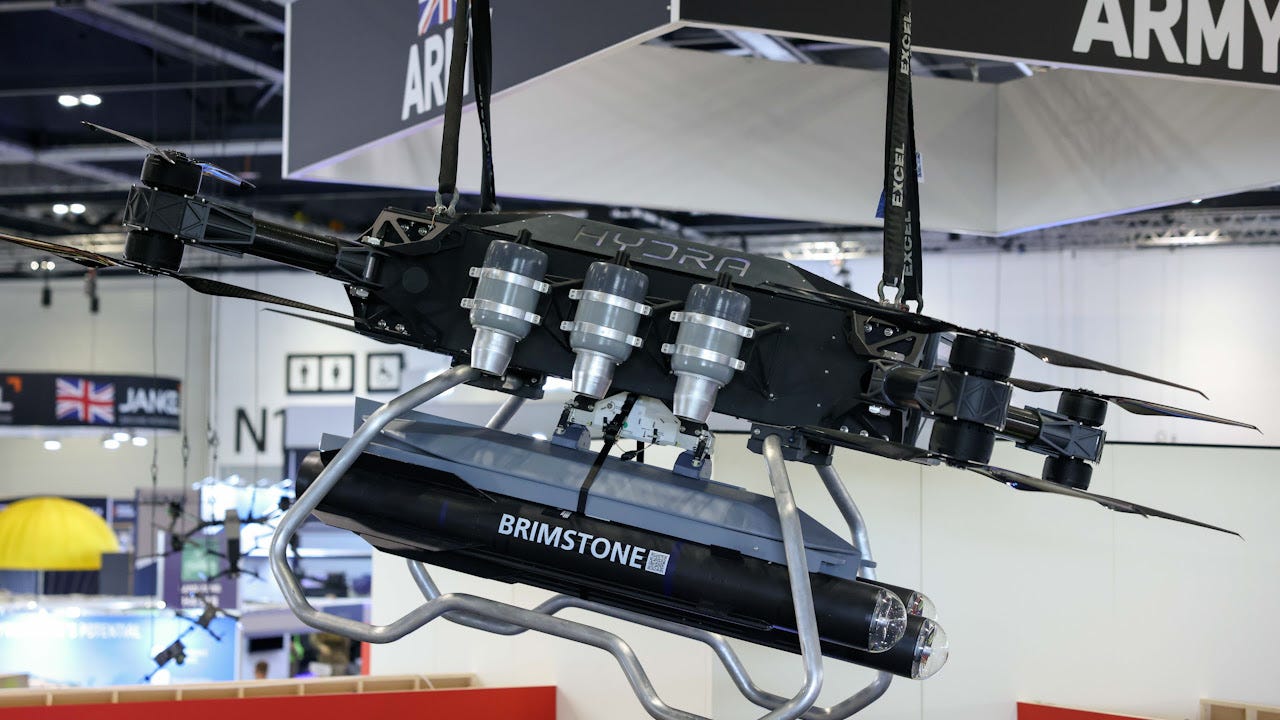

The Navy arms its drones

The Royal Navy’s experimental heavy-lift Hydra drone has been mated with Brimstone missiles. Separately, its T-600 drone has successfully launched a dummy torpedo at sea.

Deepfakes deployed

Cloned and tweaked audio apparently makes an appearance in Sudan’s civil war, via TikTok clips falsely purporting to be the voice of Sudan’s ex-President.

Tiny dancer

The Millimobile micro-robot is powered only by ambient light and passing radio waves, but still capable of getting about, performing tasks and transmitting data.

Do you have tech or science material you want us to cover? Reach out through our contact form here.