Replicant

I've cloned myself. Should it live Off World, or on my MacBook?

Do you remember the Voight-Kampff test? Blade Runners used it to figure out who was a human and who a machine. It’s going to come in handy in the not-too-distant future, I reckon. I’ve just built myself an AI Ken. KennAIth, perhaps? It shares my psychological traits and knows my biography - both professional and personal. And it speaks in my cloned voice. Take a listen as it faces my Blade Runner:

The Ken-bot now sits on my computer, ready to chat any time I’m feeling particularly narcissistic.

How did I do it? Read on.

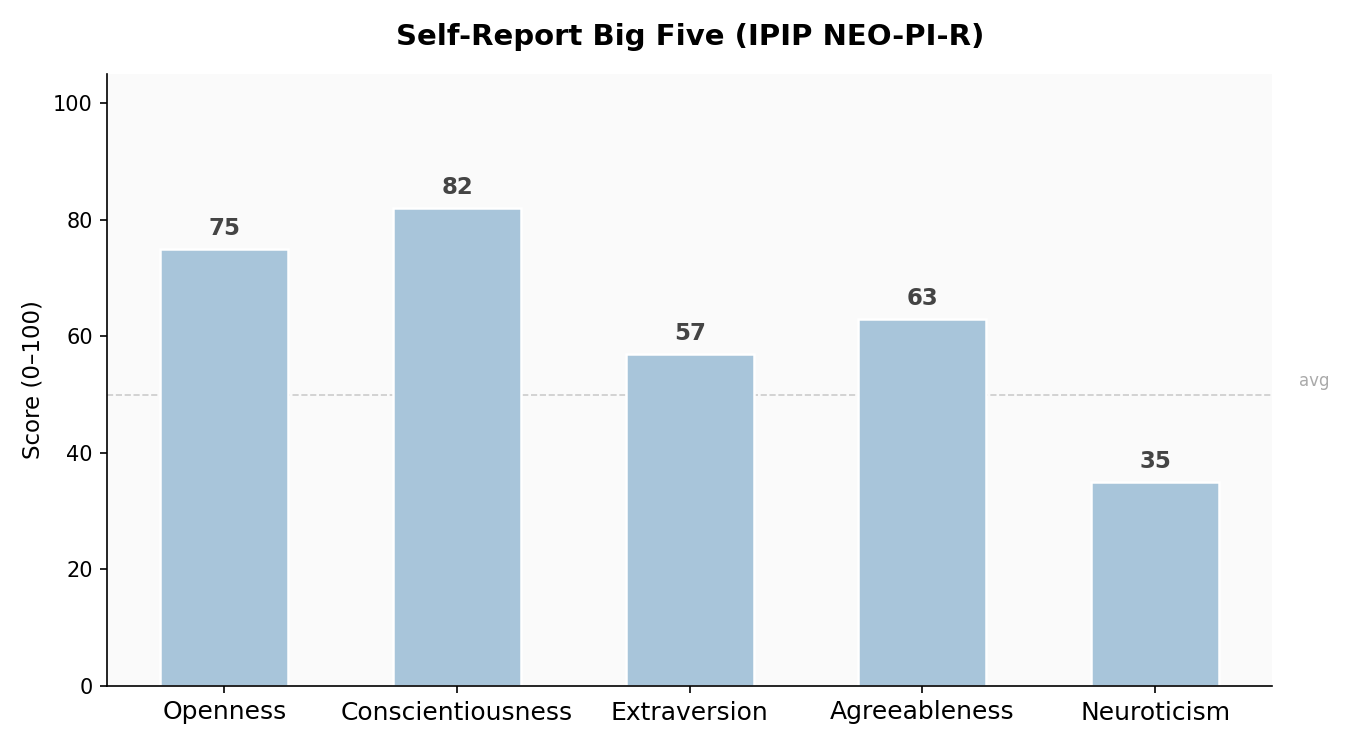

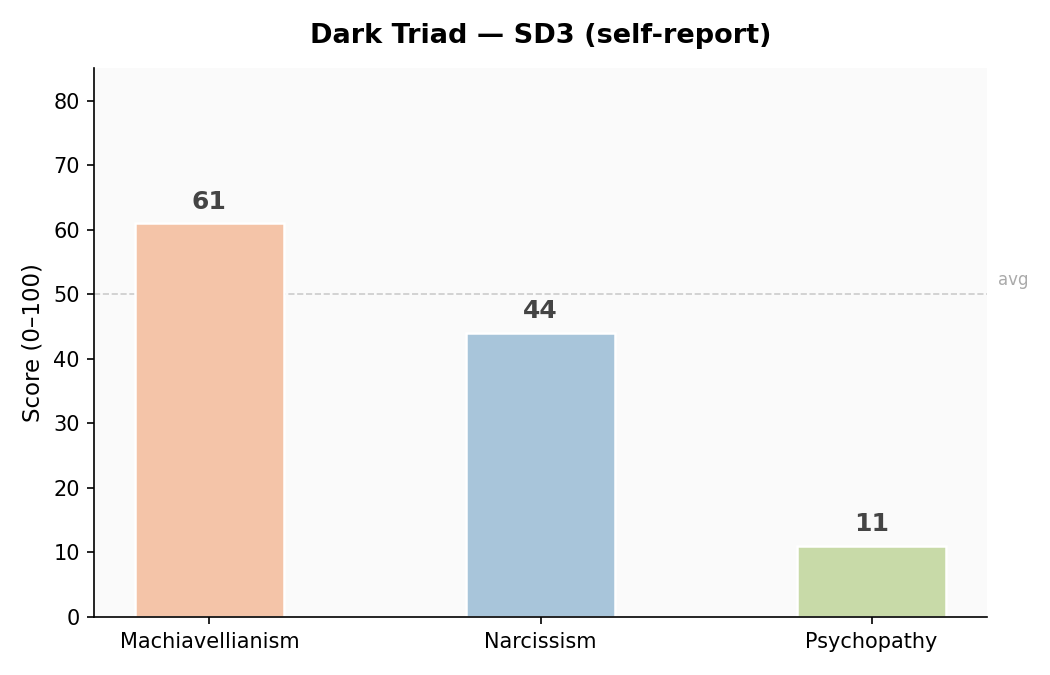

First things first - we need to capture my psychological traits. I took a bunch of standard tests - you can do some of them yourself online. The tests I took include the ‘big five’ (and its sub-traits), the dark triad, need for cognitive closure, and regulatory focus. These are all gilt-edged classics of shrinkology.

The results were … educational.

It turns out that the self-narrative I carry about with me didn’t altogether match what the tests showed. Happily I’m not a narcissist (despite doing this project) or a psycho - but I am cunning. Who knew? I’m also, on the basis of these results, not as big a worrier as I sometimes feel. The classic academic profile is of being open to new things and very conscientious - I tick both boxes. But I’m less agreeable (I know!) and less neurotic than the stereotype.

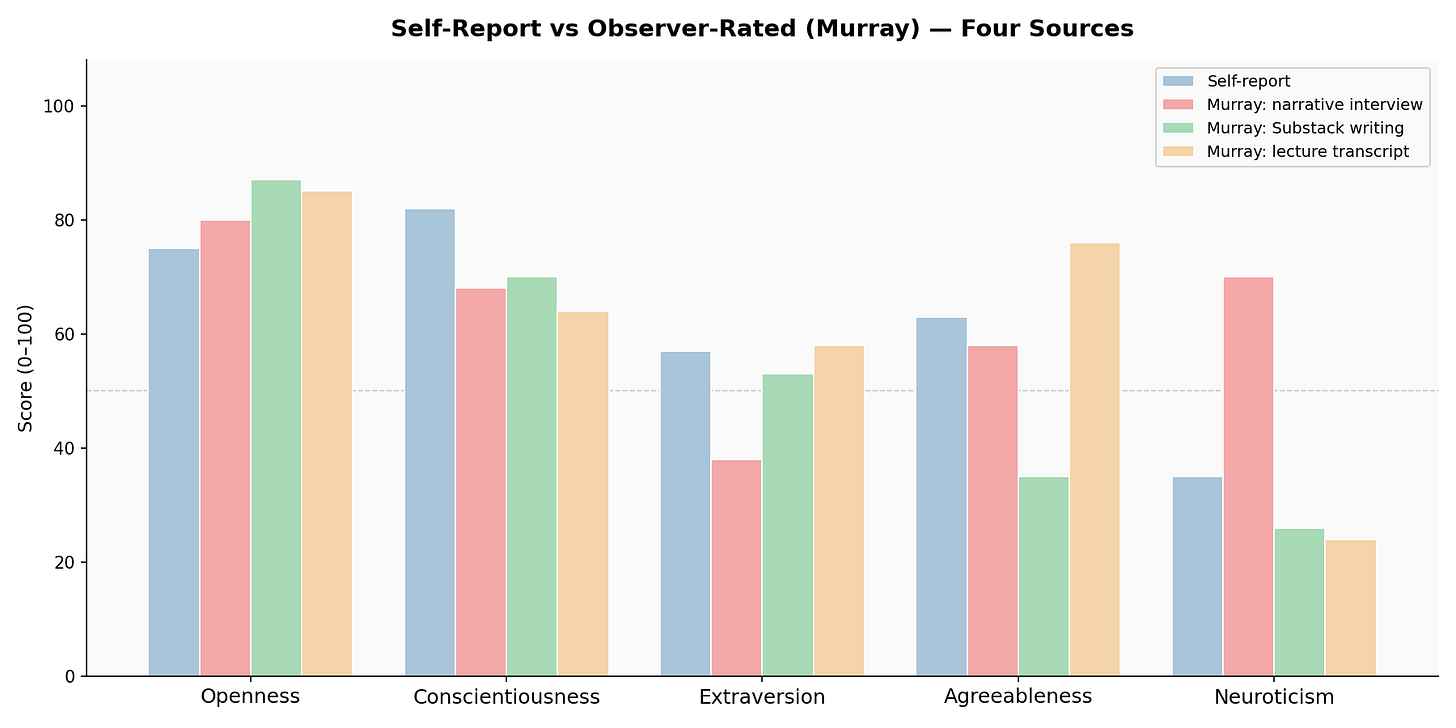

But how accurate is self-assessment really? Step two in building Ken-bot was to derive these metrics again, but this time using an AI pipeline. I called this Project Murray, after a long-dead psychologist who’d pioneered the use of narratives to derive traits. I’ve described it in more detail elsewhere. A collective of bots set to work.

First, they read my self-assessment, and then conducted a structured interview with me. Having seen my scores in advance, they could calibrate their follow-up probes extremely well. This was tough going; painful, even. The sort of stuff that undid Replicants facing the Voight-Kampff test. We covered a lot of stuff I’m definitely not going to share here.

Next, the models used this deeply personal long-form narrative and some other inputs to generate new psychological metrics. What inputs exactly? I included, inter alia, my entire Twitter corpus (15,000 tweets over a decade); my Substack writing - sampled from two years’ worth of regular posting; some of my book chapters; and, lastly and most excitingly for me from a technical perspective, some videos of me lecturing and being interviewed. On these, the models performed body language, voice and facial expression analysis - measuring things like gestures, posture, pitch and so on. Those particular results were truly fascinating.

Finally, all that data got fed into an AI group discussion, as the models first derived psychological scores from it, and then debated their findings among themselves until consensus was reached. There was, pleasingly, a good degree of overlap with the self-testing. But there were some major differences too - notably on agreeableness. The AIs concluded that I had several registers, or identities, depending on context. In public, lecturing, I was a people-pleaser, exuding warmth. In print, much more willing to pick a fight. What a wuss! And then neuroticism - in public, confident, at ease. In private, more anxious and with a hint of melancholy even. Well, they were asking some pretty bleak questions!

As Whitman said best, ‘I am large, I contain multitudes’. So do you, I’m sure.
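For the technically minded, the 'debate until consensus' step can be sketched as a toy loop in Python. To be clear, this is an illustration and not the Project Murray code: the real models argued in natural language, whereas here each model simply nudges its numbers towards the group average each round. The function name, the `pull` and `tolerance` parameters, and every score below are made up for the example.

```python
from statistics import mean


def debate_to_consensus(estimates, pull=0.5, tolerance=1.0, max_rounds=20):
    """Toy stand-in for a model debate (hypothetical, not the real pipeline).

    estimates: one dict per model, mapping trait name -> score (0-100).
    Each round, every model moves a fraction `pull` of the way towards
    the group mean. The loop stops once the models sit within
    `tolerance` points of each other on every trait.
    """
    current = [dict(e) for e in estimates]  # copy so the inputs are untouched
    traits = list(current[0])
    rounds = 0
    while rounds < max_rounds:
        # Largest gap between any two models on any single trait.
        spread = max(
            max(e[t] for e in current) - min(e[t] for e in current)
            for t in traits
        )
        if spread <= tolerance:
            break
        group_view = {t: mean(e[t] for e in current) for t in traits}
        for e in current:
            for t in traits:
                e[t] += pull * (group_view[t] - e[t])
        rounds += 1
    consensus = {t: mean(e[t] for e in current) for t in traits}
    return consensus, rounds


# Placeholder scores from three imaginary models (not real results).
initial = [
    {"agreeableness": 30, "neuroticism": 40},
    {"agreeableness": 50, "neuroticism": 45},
    {"agreeableness": 70, "neuroticism": 35},
]
consensus, rounds = debate_to_consensus(initial)
print(consensus, rounds)
```

One thing a simple averaging scheme like this can't do is what the real discussion did: notice that the disagreement on agreeableness was itself the finding, with different scores for different contexts.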

Now to build the bot itself, with all this training data. As with my Putin model, I use two paths - an out-of-the-box commercial model (Opus) and one I can fine-tune (Mistral) and host locally. Both got a rich prompt, including all the psychological material - the scores and the interview - as well as plenty of biographical information. This latter is a simple database that I’m still building out - a short autobiography. I think I can do better here for Ken-bot’s episodic memory, not least as a good one would also contribute to the psychological priming of the model. In the next version of this, I want to give both models access to my chats with Claude, though the context window will get very large.
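A rough idea of what assembling that 'rich prompt' might look like, sketched in Python. This assumes the three ingredients mentioned above (trait scores, interview material, biography) are simply stitched into one system prompt; the `PersonaProfile` class, the `build_system_prompt` function, the wording of the prompt and all the values are hypothetical placeholders, not the actual Ken-bot setup.

```python
from dataclasses import dataclass, field


@dataclass
class PersonaProfile:
    """Hypothetical container for the persona material."""
    name: str
    trait_scores: dict[str, int]  # trait -> score out of 100
    interview_excerpts: list[str] = field(default_factory=list)
    biography: list[str] = field(default_factory=list)


def build_system_prompt(profile: PersonaProfile) -> str:
    """Stitch scores, interview material and biography into one prompt."""
    traits = "\n".join(
        f"- {trait}: {score}/100"
        for trait, score in sorted(profile.trait_scores.items())
    )
    sections = [
        f"You are {profile.name}. Stay in character at all times.",
        "Psychological profile:\n" + traits,
    ]
    if profile.interview_excerpts:
        sections.append(
            "In your own words:\n"
            + "\n".join(f'"{line}"' for line in profile.interview_excerpts)
        )
    if profile.biography:
        sections.append(
            "Biography:\n" + "\n".join(f"- {fact}" for fact in profile.biography)
        )
    return "\n\n".join(sections)


# Placeholder values only, invented for the example.
ken = PersonaProfile(
    name="Ken",
    trait_scores={"openness": 85, "conscientiousness": 80, "agreeableness": 40},
    interview_excerpts=["I am less of a worrier than I sometimes feel."],
    biography=["Academic who lectures and writes about strategy."],
)
prompt = build_system_prompt(ken)
print(prompt)
```

The same string could serve as the system prompt for the commercial model and as the header for fine-tuning examples, which would keep the two paths comparable.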

And the last step - get it to speak. For that, you simply wire up ElevenLabs’ voice clone of me, which I first did last year. Then tune that so it catches the details revealed in the acoustic analysis of my lectures. Et voilà! What do you think of this project, Ken-bot?

I should probably explain what it’s all for, for anyone new to the blog - I’m building strategy simulations, and my goal is to populate them with realistic, authentic agents. Ken-bot is my prototype/proof of concept.

There are strengths to each approach, the commercial model and the fine-tuned one. I think fine-tuning embeds the psychology better, but it’s an empirical question I’m working on.